The World Wide Web ~ Concise and Simple

(As with all "concise and simple" articles I assume no prior knowledge of the subject and keep the length to less than 1500 words.)

The World Wide Web (or just "the web") is a global hypertext system invented by Sir Tim Berners-Lee in 1989. The web is not the Internet. Rather, it is an application that sends messages via the Internet. You're using it now!

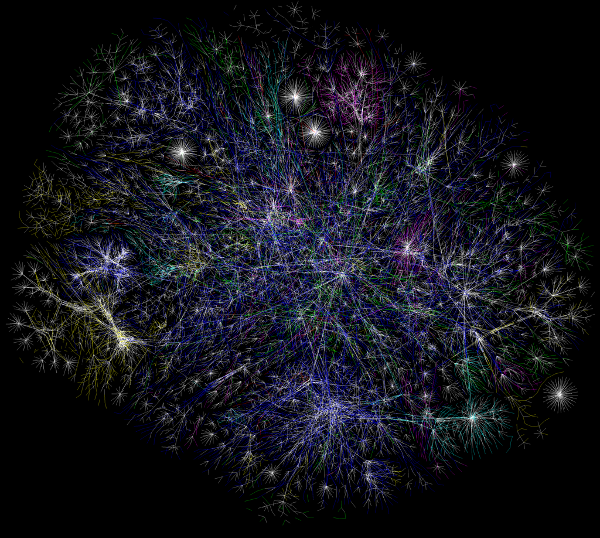

Hypertext is the core concept of the web: pages containing text and other media reference each other via so-called hyperlinks. In this way, pages connect to form a nebulous web of relationships. The term "hypertext" was coined in the 1960s by Ted Nelson, although the basic concept dates back to Vannevar Bush's 1945 article, As We May Think, in which he describes an intriguing device called the Memex (MEMory indEX). Various pre-web hypertext systems were developed in the 1960s, 70s and 80s, but it was not until the early 1990s that Berners-Lee's version of hypertext became popular.

The web is made with these concepts:

- Page - a document on the web. Web pages can contain text, images, sound, video and forms (to facilitate limited interactivity).

- Hyperlink - something in a page that, when activated, takes the user to another page on the web. Often abbreviated to "link".

- URL - a Uniform Resource Locator identifies a page or other resource on the web.

- Browser - software on the user's computer that downloads web pages, displays them and handles user interactions (such as filling in a form or clicking a link).

- Server - a device on the Internet that runs software to deliver web pages and other resources to browsers.

- HTTP - HyperText Transfer Protocol describes how browsers and servers interact.

- HTML - HyperText Markup Language defines the structure of web pages identifying such things as paragraphs, links and headings.

- CSS - Cascading Style Sheets describe how a page should look by defining layout, colour scheme, typesetting and other presentation information.

- JavaScript - a ubiquitous programming language for browsers. It defines the behaviour of a page beyond the basics of clicking links or submitting forms.
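
To make the URL entry in the list above a little more concrete, here is a minimal sketch that splits a URL into its parts using Python's standard library. The URL itself is made up for illustration and does not come from any real website.

```python
from urllib.parse import urlsplit

# Hypothetical URL, used only for illustration.
url = "http://example.org:8080/articles/web.html?lang=en#history"

parts = urlsplit(url)

print(parts.scheme)    # the protocol used to talk to the server
print(parts.hostname)  # which server to contact
print(parts.port)      # which port on that server
print(parts.path)      # which resource to ask for
print(parts.query)     # extra parameters sent with the request
print(parts.fragment)  # a position within the page, handled by the browser
```

The browser uses the scheme and host name to decide where to send its request and the path to say what it wants.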

In the early days of the web, HTML was the only way to create pages (CSS and JavaScript were later developments). The essence of HTML has not changed over the years:

HTML is written as a series of elements. Elements are defined with named tags between less-than (<) and greater-than (>) symbols. The scope of an element is defined by an opening and closing tag. For example, a heading is defined by the h1 element:

    <h1>Hello</h1>

The closing tag is just like the opening one apart from the prepended forward slash (/). The tags are a balanced pair: the scope of the heading element is defined as the text "Hello" and displayed as:

Hello

Elements can contain other elements but are not allowed to overlap:

    <p>this is <strong>valid</strong></p>

but,

    <p>this is <strong>not</p></strong>

HTML has a certain structure: two elements, head and body, are contained within an html element. Information about the document is expressed by elements within the head tags. Elements within the body tags define the structure of the page. For example:

    <html>
      <head>
        <title>My Web Page</title>
        <link rel="stylesheet" href="/static/css/styles.css"/>
        <meta name="author" content="Nicholas H.Tollervey"/>
      </head>
      <body>
        <h1>Welcome!</h1>
        <p>This is some text enclosed within a paragraph tag.
        I can link to <a href="http://ntoll.org/">other docs</a>
        using an anchor tag.</p>
      </body>
    </html>

HTML is currently at version five (HTML5). Actually, HTML5 is a collection of standards. Some add capabilities for embedding media while others add new tags. HTML5 colloquially refers to specifications for new browser behaviour too, such as local data storage.
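
Returning to the rule that elements may nest but not overlap: it can be checked mechanically by keeping a stack of open elements. The following is a minimal sketch using Python's built-in `html.parser` module. The class and function names are my own, the list of void elements is deliberately partial, and real browsers are far more forgiving than this strict check.

```python
from html.parser import HTMLParser

# Elements that never take a closing tag (a partial list, for this sketch only).
VOID_ELEMENTS = {"br", "hr", "img", "link", "meta", "input"}


class NestingChecker(HTMLParser):
    """Tracks open elements on a stack and records the first overlap found."""

    def __init__(self):
        super().__init__()
        self.open_elements = []
        self.error = None

    def handle_starttag(self, tag, attrs):
        if tag not in VOID_ELEMENTS:
            self.open_elements.append(tag)

    def handle_endtag(self, tag):
        if self.error is not None or tag in VOID_ELEMENTS:
            return
        # A closing tag must match the most recently opened element.
        if not self.open_elements or self.open_elements[-1] != tag:
            self.error = f"unexpected </{tag}>"
        else:
            self.open_elements.pop()


def is_well_nested(html):
    """Return True if every element is closed in the reverse order it was opened."""
    checker = NestingChecker()
    checker.feed(html)
    checker.close()
    return checker.error is None and not checker.open_elements


print(is_well_nested("<p>this is <strong>valid</strong></p>"))
print(is_well_nested("<p>this is <strong>not</p></strong>"))
```

The first call reports a balanced document; the second fails because the paragraph is closed while the strong element is still open.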

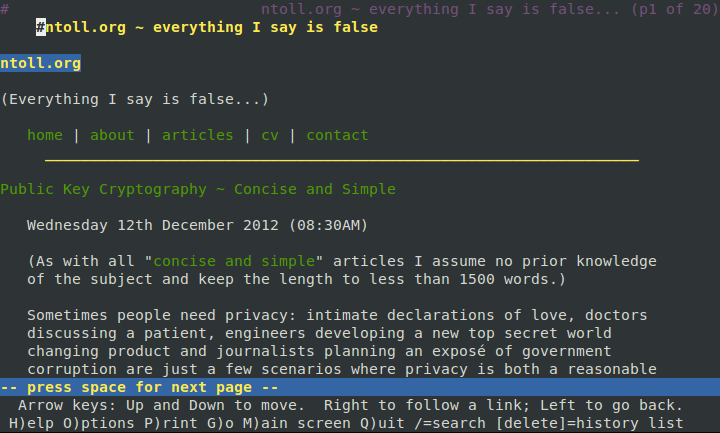

Browsers were originally simple, text-based applications, as the screenshot of the Lynx browser shows. Despite these shortcomings, the structure of the page is very clear and easy to read (hyperlinks are green).

The web "happens" in two places: the client's browser and the website's server.

Clicking a link or typing in a URL makes your browser send a request over the Internet using HTTP. The server identified within the URL responds with HTML for the web page, CSS describing how to display it and any JavaScript needed for the page to function correctly.
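
HTTP messages are plain text, so the exchange described above can be sketched without touching the network. The example below builds a minimal GET request and picks apart a hand-written response. The function names, the host `example.org` and the response content are all assumptions made for illustration; a real browser sends many more headers.

```python
def build_request(host, path):
    """Return the text of a minimal HTTP/1.1 GET request."""
    lines = [
        f"GET {path} HTTP/1.1",
        f"Host: {host}",
        "Connection: close",
        "",  # a blank line marks the end of the headers
        "",
    ]
    return "\r\n".join(lines)


def parse_response(raw):
    """Split a raw HTTP response into status code, headers and body."""
    head, _, body = raw.partition("\r\n\r\n")
    status_line, *header_lines = head.split("\r\n")
    _version, code, _reason = status_line.split(" ", 2)
    headers = {}
    for line in header_lines:
        name, _, value = line.partition(":")
        headers[name.strip().lower()] = value.strip()
    return int(code), headers, body


request = build_request("example.org", "/index.html")

# A hand-written response standing in for what a server might send back.
response = (
    "HTTP/1.1 200 OK\r\n"
    "Content-Type: text/html\r\n"
    "\r\n"
    "<html><body><h1>Welcome!</h1></body></html>"
)
status, headers, body = parse_response(response)

print(request)
print(status, headers["content-type"])
```

The status code tells the browser whether the request succeeded, and the Content-Type header tells it how to interpret the body.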

Before rendering the page to the screen the browser transforms the HTML into the document object model (DOM). The DOM is a data structure used to match HTML elements with CSS rules. Furthermore, snippets of JavaScript run at certain points in the page's existence and manipulate the DOM as events occur (such as when the page finishes loading, a button is clicked or a form is submitted).
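
As a rough illustration of the idea, the sketch below turns a fragment of HTML into a tree of nodes, attaches style declarations by tag name and then changes one node, much as a script would after an event. It is a toy model and not how any real browser implements the DOM: the `Node` and `TreeBuilder` classes are my own invention, and real CSS selectors are much richer than a lookup by tag name.

```python
from html.parser import HTMLParser


class Node:
    """One element in a toy document tree."""

    def __init__(self, tag, parent=None):
        self.tag = tag
        self.parent = parent
        self.children = []
        self.text = ""
        self.style = {}


class TreeBuilder(HTMLParser):
    """Builds a tree of Node objects from well-nested HTML."""

    def __init__(self):
        super().__init__()
        self.root = Node("#document")
        self.current = self.root

    def handle_starttag(self, tag, attrs):
        node = Node(tag, parent=self.current)
        self.current.children.append(node)
        self.current = node

    def handle_endtag(self, tag):
        # Assumes well-nested input: closing a tag moves back up to the parent.
        if self.current.parent is not None:
            self.current = self.current.parent

    def handle_data(self, data):
        self.current.text += data


def walk(node):
    """Yield every node in the tree, parents before children."""
    yield node
    for child in node.children:
        yield from walk(child)


def apply_rules(root, rules):
    """Attach each rule's declarations to every element with a matching tag."""
    for node in walk(root):
        node.style.update(rules.get(node.tag, {}))


builder = TreeBuilder()
builder.feed("<body><h1>Welcome!</h1><p>Some <strong>bold</strong> text.</p></body>")
builder.close()

# Stand-in for a stylesheet: tag name -> declarations.
rules = {"h1": {"color": "green"}, "strong": {"font-weight": "bold"}}
apply_rules(builder.root, rules)

# Stand-in for a script reacting to an event by changing the tree.
heading = next(n for n in walk(builder.root) if n.tag == "h1")
heading.text = "Welcome back!"

print([n.tag for n in walk(builder.root)])
print(heading.style, heading.text)
```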

As you interact with the browser it repeats this process to fetch new pages from the websites you visit. Sometimes your browser posts information back to a website (when you fill in a form). The website responds with a page that reflects the information you submitted.
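
When a form is posted, the field values usually travel in the body of the request as URL-encoded text. Here is a small sketch of that encoding and of how a server might decode it again. The field names and values are hypothetical.

```python
from urllib.parse import parse_qs, urlencode

# Hypothetical form fields filled in by the user.
form = {"item": "blue teapot", "quantity": "2"}

# What the browser places in the body of the request.
body = urlencode(form)

# What the server recovers from it (each field maps to a list of values).
received = parse_qs(body)

print(body)
print(received)
```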

This happens within the realm of your "client" computer. However, an equally interesting story happens on the server.

In the simplest case web pages are static so the server responds with pre-existing content. However, many websites are dynamic and allow you to change them on-the-fly. For example, as you browse a shopping website you may click buttons that add items to a virtual shopping basket. If you visit the web page that represents your basket you expect it to be up-to-date and list the correct number of items.

For this to happen several things must be in place.

The server should know who you are. You are authenticated in some way, usually with a username and secret password, and authorised to interact with certain pages. For example, only you should be able to view and change your settings. Furthermore, the server must not confuse you with other users, so your interaction with the website is associated with a unique session linked to your identity. The session lets the server track who is making each request.
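
A minimal sketch of authentication and sessions, assuming an in-memory store and function names of my own choosing, might look like this. Passwords are stored only as salted hashes and a successful log-in yields a random token that identifies the session in later requests. Real websites add much more (expiry, secure cookies, rate limiting and so on).

```python
import hashlib
import hmac
import os
import secrets

users = {}     # username -> (salt, password hash)
sessions = {}  # session token -> username


def hash_password(password, salt):
    """Derive a hash from the password so the password itself is never stored."""
    return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, 100_000)


def register(username, password):
    salt = os.urandom(16)
    users[username] = (salt, hash_password(password, salt))


def log_in(username, password):
    """Return a new session token, or None if the credentials are wrong."""
    if username not in users:
        return None
    salt, stored = users[username]
    if not hmac.compare_digest(stored, hash_password(password, salt)):
        return None
    token = secrets.token_hex(16)
    sessions[token] = username
    return token


def current_user(token):
    """Look up who is making a request from the token sent with it."""
    return sessions.get(token)


register("alice", "correct horse")
token = log_in("alice", "correct horse")
print(current_user(token))
print(log_in("alice", "wrong guess"))
```

The browser sends the token back with each request (typically in a cookie), which is how the server tells one user's requests from another's.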

The server should keep track of state. When you add an item to a shopping cart this should be reflected in the way the website behaves. Such state is stored in a database "backend".
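
To illustrate, here is a sketch of a shopping cart kept in a database, using Python's built-in `sqlite3` module with an in-memory database. The table layout and function names are assumptions for the example; a real shop would have a far richer schema.

```python
import sqlite3

db = sqlite3.connect(":memory:")
db.execute(
    "CREATE TABLE cart ("
    "username TEXT, item TEXT, quantity INTEGER, "
    "PRIMARY KEY (username, item))"
)


def add_to_cart(username, item):
    """Add one of the item to the user's cart, creating the row if needed."""
    cursor = db.execute(
        "UPDATE cart SET quantity = quantity + 1 WHERE username = ? AND item = ?",
        (username, item),
    )
    if cursor.rowcount == 0:
        db.execute("INSERT INTO cart VALUES (?, ?, 1)", (username, item))
    db.commit()


def cart_contents(username):
    """Return (item, quantity) pairs for one user, sorted by item name."""
    rows = db.execute(
        "SELECT item, quantity FROM cart WHERE username = ? ORDER BY item",
        (username,),
    )
    return rows.fetchall()


add_to_cart("alice", "teapot")
add_to_cart("alice", "teapot")
add_to_cart("alice", "kettle")
print(cart_contents("alice"))
```

Because the state lives in the database rather than in the page, the basket is still correct the next time the user asks for it.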

The HTML should reflect the state stored in the database. This is achieved with templates: snippets of HTML combined with data specific to the client. Rendering templates to HTML is the job of the "frontend". The following snippet is for a simple shopping basket (anything enclosed within {% %} or {{ }} is replaced):

    <h1>Hi, {{ user.username }}!</h1>
    <p>Your shopping cart contains:</p>
    <ul>
    {% for item in user.cart %}
      <li>{{ item.name }} - {{ item.description }}</li>
    {% endfor %}
    </ul>
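
To show what rendering amounts to, the sketch below produces the same page with plain Python string formatting. It is a hand-rolled stand-in for a template engine, not the engine itself, and the `render_cart` function and the shape of the `user` data are assumptions for the example. Note that user-supplied text is escaped so it cannot be mistaken for HTML.

```python
from html import escape


def render_cart(user):
    """Build the basket page for one user, escaping all user-supplied text."""
    items = "\n".join(
        f"<li>{escape(item['name'])} - {escape(item['description'])}</li>"
        for item in user["cart"]
    )
    return (
        f"<h1>Hi, {escape(user['username'])}!</h1>\n"
        "<p>Your shopping cart contains:</p>\n"
        f"<ul>\n{items}\n</ul>"
    )


# Hypothetical data, as it might come back from the database.
user = {
    "username": "alice",
    "cart": [
        {"name": "Teapot", "description": "Holds <2> cups"},
        {"name": "Kettle", "description": "Boils water"},
    ],
}

page = render_cart(user)
print(page)
```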

The server should be reliable. Between the front and back ends is a logic layer checking requests, interacting with the database and ensuring the correct HTML is served. This needs to work correctly (imagine if, when clicking a "buy this item" button, a website added two items rather than one).

The server should perform well. A website should quickly handle a great many requests at once. Two common ways to achieve good performance are caching (store and re-use things that take time to compute but don't change that much over time) and load balancing (route incoming calls to a pool of servers so the workload is shared). Popular websites consist of many servers handling specific tasks (such as the database, rendering templates or the logic) hidden behind layers of load balancers and caches.
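
Both ideas can be sketched in a few lines. Below, a cache remembers the result of a (pretend) slow rendering function and a round-robin balancer hands each request to the next server in a pool. The function names and server names are hypothetical, and round-robin is only one of several balancing strategies.

```python
from functools import lru_cache
from itertools import cycle

render_count = 0  # counts how often the slow work is actually done


@lru_cache(maxsize=128)
def render_product_page(product_id):
    """Pretend this is slow; the result is remembered for each product_id."""
    global render_count
    render_count += 1
    return f"<h1>Product {product_id}</h1>"


# Hypothetical pool of servers.
servers = cycle(["server-a", "server-b", "server-c"])


def route(request_path):
    """Round-robin: pair each request with the next server in the pool."""
    return next(servers), request_path


render_product_page(42)
render_product_page(42)  # second call is answered from the cache

assigned = [route("/basket")[0] for _ in range(4)]

print(render_count)
print(assigned)
```

The hard part of caching in practice is deciding when a stored result is out of date, which this sketch ignores entirely.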

If this sounds complicated, that's because it is. Even so, the description given above is a simplification of the amazing engineering that takes place to display a web page on your screen.

However, all is not well.

Browsers implement web standards in different ways: Internet Explorer is famous for getting things wrong. HTML and CSS grow more complex with each revision - gone are the days of a beautifully simple hypertext system. Competing technical standards are promoted for commercial rather than engineering reasons and some are so complex they're practically useless. Alas, JavaScript is hopelessly unintuitive and provides developers with plenty of rope with which to hang themselves.

Finally, there are political problems.

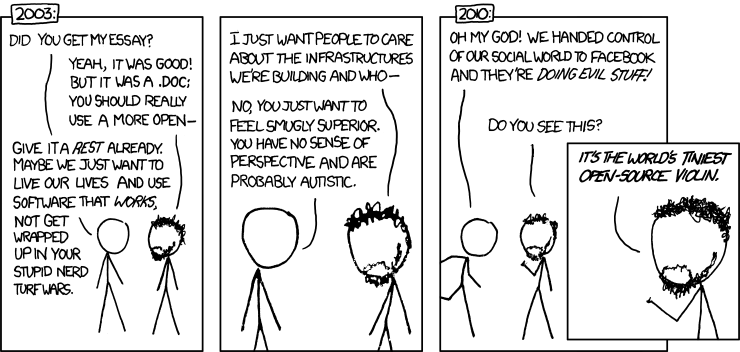

You can't publish on the web unless you know how to set up a website or you use a third party service such as a social network. Social networks are hugely popular but turn the web into a handful of walled gardens - the antithesis of the decentralised early web. Furthermore, such websites track and analyse user behaviour and sell it (via advertising). They also provide services for storing users' digital assets (in order to harvest yet more data to sell). As has been pointed out before, this is deeply worrying.

Ultimately, it is a question of who controls the infrastructure. Many users are happy to sacrifice privacy and control for ease of use and convenience. Yet I wonder how many realise that they are tracked and influenced by only a handful of very powerful technology companies.

What of the future? I like to think that William Gibson was right when, in 1996, he said:

[T]he World Wide Web [is] the test pattern for whatever will become the dominant global medium.

(Who knows what will happen next?)

1486 words. Image credit: © 2005 opte.org under a Creative Commons license. Image of Lynx browser © 2012 by the author. "Infrastructure" cartoon © Randall Munroe under a Creative Commons license.